Delta Lake vs Data Lake

By Christian Prokopp on 2022-11-02

Should you switch your Data Lake to a Delta Lake? At first glance, Delta Lakes offer benefits and features like ACID transactions. But at what cost?

Data Lake

The Data Lake traces its origin back to the heyday of Hadoop and the emergence of cloud computing. The idea was intriguing. Store all of your raw data, e.g. log files, CSV, JSON, and so forth, on commodity scale-out storage and compute and make sense of it at read time, for example, with MapReduce.
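To make "make sense of it at read time" concrete, here is a minimal sketch in plain Python rather than MapReduce. The log lines, the field names, and the `read_with_schema` helper are all hypothetical and only illustrate the idea: the raw data is stored untouched, and a schema is applied only when someone reads it.

```python
import json

# Hypothetical raw log lines, stored exactly as they arrived.
RAW_LINES = [
    '{"user": "a", "ms": 120}',
    '{"user": "b", "ms": 340, "region": "eu"}',
    'not json at all',
]


def read_with_schema(lines, fields):
    """Apply a schema at read time: keep the requested fields, default to None."""
    for line in lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed raw data instead of failing the whole read
        if not isinstance(record, dict):
            continue  # valid JSON, but not a record we can project fields from
        yield {name: record.get(name) for name in fields}


# The reader, not the writer, decides which columns matter.
rows = list(read_with_schema(RAW_LINES, ["user", "region"]))
```

A different reader could request `["user", "ms"]` from the same files without any rewrite of the stored data, which is the flexibility the paragraph above describes.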

That approach suited evolving schemas, scalable writes, and computing that was inexpensive compared to Oracle or similar systems. However, MapReduce is expensive in engineering time and excludes most users from working with the data directly.

Innovations like Apache Hive and Apache Parquet addressed some of these shortcomings. Hive provides a familiar SQL interface to the data, and Parquet makes storing and retrieving data more efficient. A key aspect of Data Lakes remained the same, though. They are usually append-only and optimised for large-scale processing, which makes retrieving small amounts of data slow compared to RDBMS or indexed NoSQL stores.

Delta Lake

Delta Lake offers several improvements intended to make it usable by more users and in more scenarios than a Data Lake. It is sometimes framed as an additional layer on top of the Data Lake, or as its evolution. However, as expert readers might note, some of these features and improvements have made their way into the ever-evolving Data Lake ecosystems or can be achieved there with effort, which blurs the line between the two.

What most RDBMS users miss in a Data Lake is ACID transactions, which Delta Lake provides as a powerful feature, including the ability to version data and time travel back and forth between versions. Delta Lake also makes metadata management more scalable, consistent, and flexible. It integrates well with batch and streaming workloads through SQL, Python, Scala and Java APIs.
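The versioning and time travel mentioned above can be pictured with a toy, in-memory transaction log. This is a sketch of the general idea only, not Delta Lake's implementation or API: the `ToyTableLog` class, the `Commit` record, and the file names are all hypothetical. It assumes each commit atomically records which data files were added and removed, so any earlier version can be rebuilt by replaying the log.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Commit:
    """One atomic change to the table: data files added and files removed."""
    added: frozenset = frozenset()
    removed: frozenset = frozenset()


@dataclass
class ToyTableLog:
    """Hypothetical append-only log; a commit's list index is its version."""
    commits: list = field(default_factory=list)

    def commit(self, added=(), removed=()):
        """Append one commit and return the new version number."""
        self.commits.append(Commit(frozenset(added), frozenset(removed)))
        return len(self.commits) - 1

    def snapshot(self, version=None):
        """Replay commits up to `version` to find the live data files."""
        if version is None:
            version = len(self.commits) - 1  # default to the latest version
        files = set()
        for c in self.commits[: version + 1]:
            files -= c.removed
            files |= c.added
        return files


log = ToyTableLog()
v0 = log.commit(added=["part-0.parquet"])
v1 = log.commit(added=["part-1.parquet"])
# An update rewrites a file: the old one is removed and a new one is added
# in the same commit, so readers see either the old state or the new one.
v2 = log.commit(added=["part-2.parquet"], removed=["part-0.parquet"])

latest = log.snapshot()        # current state of the table
as_of_v1 = log.snapshot(v1)    # "time travel" to an earlier version
```

Because old commits are never modified, reading an earlier version is just a shorter replay of the same log.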

Ideally, Delta Lakes offer more usability to engineers and savvy data end-users. That reduces or eliminates the need to land data aggregates in specialised stores for use cases with differing access patterns, and with it the need to reconcile data across multiple stores.

Data Lake vs Delta Lake

While Delta Lake provides significant improvements and features, it comes at a cost. Firstly, Delta Lakes are more expensive in money, time, infrastructure and complexity than Data Lakes. Data Lakes and technology like Hive, Trino and Athena are cost-efficient for their ideal use cases. AWS Athena, for example, is priced at $5 per TB scanned, which can go a long way with efficient Parquet storage.
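A quick back-of-the-envelope calculation shows why the scan-based price can go a long way with efficient storage. The only figure taken from the text is the $5 per TB scanned; the data volumes, the query count, and the assumption that a columnar query reads only a quarter of the data are hypothetical, and the sketch ignores any other pricing rules that may apply.

```python
PRICE_PER_TB_USD = 5.0  # the per-TB-scanned price quoted in the text


def scan_cost_usd(tb_scanned_per_query, queries=1):
    """Simplified cost model: price times data scanned, nothing else."""
    return tb_scanned_per_query * queries * PRICE_PER_TB_USD


# Hypothetical workload: 100 queries over a 1 TB dataset.
full_scan_cost = scan_cost_usd(1.0, queries=100)

# Assumption for illustration: with columnar Parquet files, each query
# only needs to read a quarter of the data.
parquet_cost = scan_cost_usd(0.25, queries=100)

print(f"full scans: ${full_scan_cost:.2f}, Parquet: ${parquet_cost:.2f}")
```

Under these assumed numbers the cost falls in direct proportion to the data scanned, so storage layout is the main cost lever in this pricing model.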

You should only consider a Delta Lake if a Data Lake proves insufficient. The connectivity and integration options of Delta Lakes allow you to treat this as an evolution: upgrading from a Data Lake to a Delta Lake when needed is a viable option. Use Hive, Athena, Trino, Flink or a similar Data Lake technology if it works for your use case, especially when you are cost-sensitive.

Secondly, the underlying technologies and the architecture of Delta Lakes dictate constraints. Limits are natural when you run on distributed computing and storage. You can lessen them with cutting-edge, expensive machines, with local storage, or by optimising and tuning in private clouds. But you will not beat systems built for a purpose. Do not expect to outperform an RDBMS in its domain or a specialised NoSQL store in its own.

Depending on the use case, you could choose a simple Data Lake and optimised storage. However, Delta Lakes can be an appealing option where performance expectations allow some flexibility to address multiple access patterns and needs in one architecture.

Update

AWS released Athena Spark at re:Invent 2022. See the follow-up post: Is Athena Spark a Delta Lake alternative to Databricks?

Christian Prokopp, PhD, is an experienced data and AI advisor and founder who has worked with Cloud Computing, Data and AI for decades, from hands-on engineering in startups to senior executive positions in global corporations. You can contact him at christian@bolddata.biz for inquiries.

Related Posts

How many words are 128k tokens?

2024-04-12

128k tokens are 96k words in English for ChatGPT 3.5 and 4. The ratio is estimated to be 0.75 words per token. However, the answer is not straightf...

Free Amazon Product and Bestseller Data

2024-04-11

Today, we release a massive dataset for non-commercial use, i.e. research or personal projects. The dataset covers Amazon product data for all of 2...

Javascript TDD with ChatGPT

2023-04-05

Test-driven development in Javascript with ChatGPT-4 works. An example demonstrates it using a precise description and refined prompt engineering.

Is Athena Spark a Delta Lake alternative to Databricks?

2022-12-02

Finally. AWS re:Invent 2022 brought the answer to both Databricks and Athena's worst limitations. Athena Spark promises to bring Delta Lake scale-o...

4 career tips I wish I knew

2022-05-25

When I mentor university students or discuss careers with the people I lead, I often draw from four pieces of advice. I wish I had known these when...

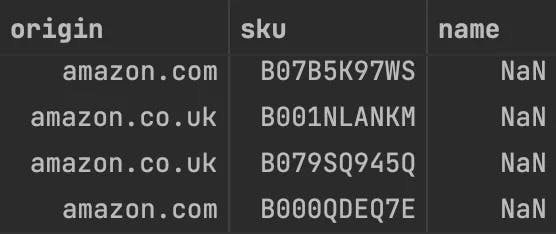

Bad Data: Nameless Amazon bestsellers

2022-05-03

Many Amazon marketplace customers know that its huge product catalogue has data quality issues. However, they might expect its top sellers, which t...