One simple thing companies miss about their Data

By Christian Prokopp on 2022-08-08

There is one simple thing most companies miss about their data. Learning it has been instrumental in my work as a data professional ever since.

Regarding data, we regularly cannot see the wood for the trees. It happened to me a decade ago at a startup, when the brilliant Ted Dunning visited us. We did the dog and pony show to impress him with the cool stuff we had built. He listened, and the comment that stuck with me was, "All the successful startups I have seen measure themselves".

Know thyself

It was powerful. Ted did not say much about what we did wrong or what we should do. At least not directly, but we all got the message, and it hit home.

We were too comfortable with our work, i.e. we knew every line of code and every datastore. But humans are terrible at looking at data and finding patterns without some (graphical) reporting. I mined millions and millions of products daily but had only basic observability built in because I knew every process and system involved. Yet that was neither good enough nor sustainable.

We heard Ted loud and clear. We started collecting and visualising operational data and metadata to help us manage our platform and processes. Eventually, we exited to Google, so things did work out.

Define and measure data quality and processing

The one simple thing most companies miss about their data is measuring it, and measuring the right things, which is tough. Many companies use data to measure (other) things, but few measure the data itself! That means the data quality, the processes and the metadata: is the data you use trustworthy? What are the trends in data quality, size, speed, etc.?

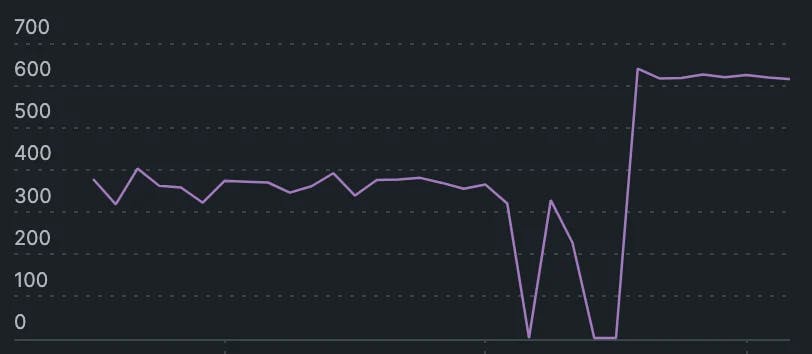

Since Ted's memorable visit, I have developed this further in every role, and it keeps paying dividends. Recently, for example, I spent a good week trying to figure out a weird shape in the curve of some operational metrics on processing throughput and queuing in my staging data platform at Bold Data. The platform's overall performance was good, and so was the data quality. Still, the curve looked wonky, with jumps where there should have been smooth lines. After extensive digging and thinking, I found overlapping, interfering behaviour between multiple data mining processes. It only occurs under specific circumstances, but it could subtly yet significantly affect the SLA and cost in the long term. It is the kind of problem that is hard to predict or test for proactively, or even to detect after the fact.
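
The post does not say how the irregularity was spotted beyond watching the curves. As a hedged illustration, here is a minimal Python sketch of one common way to flag jumps in a metric that should change smoothly: a robust z-score over step-to-step differences, using the median and the median absolute deviation (MAD). The function name `find_jumps` and the default threshold of 5 are assumptions for illustration, not the method used at Bold Data.

```python
from statistics import median
from typing import List, Sequence


def find_jumps(series: Sequence[float], threshold: float = 5.0) -> List[int]:
    """Return indices i where the step series[i] - series[i - 1] is an outlier.

    Hypothetical helper: compares every step with the median step,
    scaled by the median absolute deviation (MAD) of all steps.
    """
    if len(series) < 3:
        return []  # too few steps to establish a baseline
    steps = [b - a for a, b in zip(series, series[1:])]
    typical = median(steps)
    mad = median(abs(s - typical) for s in steps)
    if mad == 0:
        # More than half of the steps are identical: flag any step that differs.
        return [i + 1 for i, s in enumerate(steps) if s != typical]
    # 1.4826 * MAD approximates the standard deviation for normally distributed data.
    scale = 1.4826 * mad
    return [i + 1 for i, s in enumerate(steps) if abs(s - typical) / scale > threshold]


# A slightly noisy, steadily rising metric with one sudden jump.
throughput = [100, 101.2, 101.9, 103.1, 104.0, 120.0, 121.1, 121.9, 123.2, 124.0]
print(find_jumps(throughput))  # [5]
```

The median and MAD are used instead of the mean and standard deviation so that the jumps being searched for do not inflate the baseline they are measured against.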

Do not trust what you have not checked yourself

In previous roles, whenever I had to deal with legacy systems and data, I used a two-pronged approach covering quality and quantity: manually dive into the raw data and systems to understand what is what, and automate metrics at a larger scale. Firstly, do not believe ancient data dictionary documents, third-party consultants, or system integrators with underpaid and overworked staff.

I found plenty of cautionary tales. There were innocuous-looking columns in tables that contained PII, either because of a broken process or because users had manually entered it in the wrong field. Debug logs spilt sensitive financial data from secured processes into unsecured areas. Unicode characters broke ancient SQL and processing scripts, leading to strange behaviour. Data synchronisation processes missed a few financial records every time they ran, just few enough to slip through as reconciliation errors for the finance department to deal with, but monetary errors nonetheless. Or there was the supply chain system that dropped records when overloaded and never reconciled them with the source system, mysteriously losing packages.
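
The synchronisation and supply chain failures share a pattern: records that exist in one system but not in the other. As a hedged illustration, not the approach used on those projects, here is a minimal Python sketch of key-based reconciliation. It assumes every record has a stable unique ID and an amount; the `reconcile` function and the `{record_id: amount}` shape are hypothetical.

```python
from decimal import Decimal
from typing import Dict, List, Mapping, Union


def reconcile(source: Mapping[str, Decimal],
              target: Mapping[str, Decimal]) -> Dict[str, Union[List[str], Decimal]]:
    """Compare hypothetical {record_id: amount} mappings from two systems."""
    # Records the sync never delivered, e.g. silently dropped under load.
    missing = sorted(source.keys() - target.keys())
    # Records in the target with no known origin.
    unexpected = sorted(target.keys() - source.keys())
    # Records present in both systems but with different amounts.
    mismatched = sorted(k for k in source.keys() & target.keys() if source[k] != target[k])
    # Net monetary difference; Decimal avoids binary floating-point rounding.
    net_diff = sum(source.values(), Decimal(0)) - sum(target.values(), Decimal(0))
    return {"missing": missing, "unexpected": unexpected,
            "mismatched": mismatched, "net_diff": net_diff}


source = {"t1": Decimal("10.00"), "t2": Decimal("5.50"), "t3": Decimal("2.25")}
target = {"t1": Decimal("10.00"), "t3": Decimal("2.20"), "t4": Decimal("1.00")}
print(reconcile(source, target))
# {'missing': ['t2'], 'unexpected': ['t4'], 'mismatched': ['t3'], 'net_diff': Decimal('4.55')}
```

Checking per record rather than only comparing totals matters: a dropped record and an inflated amount elsewhere can cancel out in `net_diff`, while both still show up in the per-key lists.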

Secondly, start measuring things. It can be simple, like how many records go into and come out of a pipeline. What defines a good value for a field, what is the ratio of good to bad values, and how does it change over time? Look at basic stats, e.g. NULL might be acceptable in your schema, but is it acceptable that all records are NULL? How long do processes take? How are they interconnected? You get the idea.
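
The basic checks in the previous paragraph fit in a few lines of code. This is a minimal Python sketch, not a prescribed tool: it assumes records arrive as dictionaries and treats a missing key like NULL, and the names `FieldProfile`, `profile_field`, `batch_warnings` and the default thresholds are hypothetical and purely illustrative.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Iterable, List


@dataclass
class FieldProfile:
    """Basic statistics for one field across a batch of records."""
    total: int
    nulls: int
    valid: int  # non-NULL values that pass the field's validity rule

    @property
    def null_ratio(self) -> float:
        return self.nulls / self.total if self.total else 0.0

    @property
    def valid_ratio(self) -> float:
        # Share of non-NULL values that are "good" by the supplied rule.
        non_null = self.total - self.nulls
        return self.valid / non_null if non_null else 0.0


def profile_field(records: Iterable[Dict[str, Any]], field: str,
                  is_valid: Callable[[Any], bool]) -> FieldProfile:
    total = nulls = valid = 0
    for record in records:
        total += 1
        value = record.get(field)  # a missing key is treated like NULL
        if value is None:
            nulls += 1
        elif is_valid(value):
            valid += 1
    return FieldProfile(total, nulls, valid)


def batch_warnings(records_in: int, records_out: int, rejected: int,
                   profile: FieldProfile, max_null_ratio: float = 0.5,
                   min_valid_ratio: float = 0.95) -> List[str]:
    """Return human-readable warnings; the thresholds are illustrative."""
    warnings = []
    # Every record that enters should either come out or be explicitly rejected.
    if records_in != records_out + rejected:
        warnings.append(f"count mismatch: {records_in} in, {records_out} out, {rejected} rejected")
    if profile.total and profile.null_ratio == 1.0:
        warnings.append("field is NULL in every record")
    elif profile.null_ratio > max_null_ratio:
        warnings.append(f"NULL ratio {profile.null_ratio:.0%} above threshold")
    if profile.total > profile.nulls and profile.valid_ratio < min_valid_ratio:
        warnings.append(f"valid ratio {profile.valid_ratio:.0%} below threshold")
    return warnings


records = [{"email": "a@example.com"}, {"email": None}, {"email": "not-an-email"}, {}]
profile = profile_field(records, "email", lambda v: "@" in v)
print(batch_warnings(10, 8, 1, profile))
# ['count mismatch: 10 in, 8 out, 1 rejected', 'valid ratio 50% below threshold']
```

Storing these numbers for every run, rather than only alerting on them, is what lets you see how they change over time.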

"We do not know" is not good enough

The interesting part is that in the examples I mentioned, the risk was either unknown or not recognised, e.g. leaking financial data. Or the error was small enough that people and processes started to work around it, e.g. expecting to lose X% of shipped packages or to absorb Y% monetary error. Instead of addressing the issues, people accepted that these systems and processes were too complex and demanding to fix.

However, processes in an organisation are deterministic. They may not be understood because they are complex or not measured, but there is no magic in them. Not knowing why things go wrong should be an alarm bell. You may choose to ignore an issue because it is economical to do so, but not measuring and understanding it is dangerous.

My version of Ted's advice today is "Companies that do well measure, understand and can trust their data all the way from origin to consumption".

Christian Prokopp, PhD, is an experienced data and AI advisor and founder who has worked with Cloud Computing, Data and AI for decades, from hands-on engineering in startups to senior executive positions in global corporations. You can contact him at christian@bolddata.biz for inquiries.
